Governance of AI systems is no longer optional. ISO 42001 provides a recognised certification framework.

Speeki takes a practical approach to AI governance.

We build our own AI tools and solutions, so we know first-hand the challenges, the opportunities and the risks.

Our history with AI and technology

Scott Lane brings nearly two decades of specialised experience to Speeki’s ISO 42001 AI management system services.

With an undergraduate background in software engineering and experience at one of the world’s largest IT companies, Scott has worked with technology throughout his career. Since 2020, software development at Speeki has been almost entirely AI-based.

Speeki initially built solutions using IBM Watson AI and now develops agentic AI systems. The team works with AI daily, both as users and builders. Speeki has also developed its own AI governance models using ISO 42001 as the reference standard.

Unlike many certification bodies, Speeki has qualified auditors in house with direct experience in AI governance. We also maintain a strong internal resource base and use our own AI agents to support assessment and delivery.

Speeki’s added value

ISO 42001 certification, combined with AI-driven software to manage your AI management system in line with the standard

ISO 42001 certification process explained

-

Work on an ISO 42001 AI management system starts with understanding where AI operates within your organisation and how those systems are currently governed.

The first step is a comprehensive AI inventory and gap analysis. This identifies all AI systems in use, whether developed internally, sourced from vendors or embedded in third-party services, and assesses existing governance practices against ISO 42001 requirements.

This initial assessment often reveals AI systems operating without sufficient oversight, unclear accountability for AI-driven decisions, limited risk assessment, weak data governance, inadequate transparency and insufficient monitoring of system performance and impacts. Many organisations discover AI being used across departments without central visibility, creating unmanaged risks and compliance gaps.
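To make this concrete, an AI inventory can be kept as structured records so that common gaps are flagged automatically rather than found by hand. The sketch below is a minimal Python example and is illustrative only. The record fields (`source`, `owner`, `risk_assessed`, `monitored`), the system names and the gap rules are hypothetical assumptions. They are not requirements quoted from ISO 42001.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical inventory record; field names are illustrative assumptions.
@dataclass
class AISystem:
    name: str
    source: str            # "internal", "vendor" or "embedded"
    owner: Optional[str]   # accountable person or team, if known
    risk_assessed: bool    # a documented risk assessment exists
    monitored: bool        # performance and impacts are monitored

VALID_SOURCES = {"internal", "vendor", "embedded"}

def find_gaps(inventory: list[AISystem]) -> dict[str, list[str]]:
    """Return a mapping of system name -> governance gaps found for it."""
    gaps: dict[str, list[str]] = {}
    for system in inventory:
        issues = []
        if system.source not in VALID_SOURCES:
            issues.append("unknown source")
        if not system.owner:
            issues.append("no accountable owner")
        if not system.risk_assessed:
            issues.append("no risk assessment")
        if not system.monitored:
            issues.append("no monitoring")
        if issues:
            gaps[system.name] = issues
    return gaps

# Example inventory covering internal, vendor and embedded AI (hypothetical names).
inventory = [
    AISystem("support-chatbot", "vendor", "Customer Service", True, False),
    AISystem("churn-model", "internal", None, False, True),
    AISystem("crm-lead-scoring", "embedded", "Sales Ops", True, True),
]

for name, issues in find_gaps(inventory).items():
    print(f"{name}: {', '.join(issues)}")
```

Here the vendor chatbot is flagged for missing monitoring, and the internal model is flagged for having no owner and no risk assessment. The embedded tool passes every check. A real gap analysis would test against the full set of ISO 42001 requirements, not just these four checks.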

ISO 42001 provides a structured framework to move from ad hoc AI adoption to responsible and governed deployment. It supports consistent management of technical, ethical, legal and business risks across the full AI lifecycle, from design and development through deployment, monitoring and retirement.

Organisations typically adopt ISO 42001 to respond to emerging AI regulation, manage liability associated with AI decisions, build trust with stakeholders and demonstrate leadership in responsible AI use. Certification signals governance maturity and a proactive approach to AI risk management.

Most organisations complete the certification process within six to twelve months, depending on AI system complexity, existing governance maturity and organisational scale. The outcomes include reduced regulatory and legal risk, improved stakeholder confidence, stronger competitive positioning and the ability to deploy AI more effectively within a clear governance structure.

-

Implementing ISO 42001 effectively requires cross-functional expertise that spans technical AI knowledge, risk management, governance frameworks and emerging regulatory requirements. These capabilities are rarely concentrated in a single function.

Data scientists, AI engineers, legal counsel, compliance teams, risk managers, product owners and senior leaders all play important roles in AI governance. In practice, they often lack a shared understanding of how to assess AI risks, apply controls across the AI lifecycle, ensure transparency and explainability, manage third-party AI systems and maintain effective oversight.

Speeki’s intensive two-day and three-day ISO 42001 training courses are designed to build this shared understanding. The courses walk through each requirement of the standard using practical examples drawn from a wide range of AI use cases, including customer-facing chatbots, predictive analytics, automated decision systems and generative AI.

Participants learn how to conduct AI impact assessments, apply risk-based controls at each lifecycle stage, establish governance and oversight structures, manage AI supply chains, address data quality and provenance, implement transparency mechanisms and build the documentation needed for assessment.

The three-day course includes additional modules covering AI ethics frameworks, regulatory developments such as the EU AI Act and preparation for ISO 42001 assessments.

Delivered on site or remotely, the training creates a common language between technical and non-technical stakeholders. This accelerates implementation, reduces reliance on external advisers and strengthens internal AI governance capability across the organisation.

-

A core principle of ISO 42001 is that AI governance must be proportionate to the risks posed by each AI system. Not all systems require the same level of oversight. Excessive controls can limit innovation, while insufficient governance increases the likelihood of harm.

ISO 42001 requires a risk-based approach across the AI lifecycle. The level of governance, control design, testing and monitoring should reflect factors such as potential harm, regulatory exposure, decision impact and deployment context. For example, a product recommendation engine presents very different risks from an AI system used for credit decisions, medical diagnosis or autonomous control. Each can be governed effectively by aligning controls to its risk profile.

This approach starts with structured AI risk assessments. These should consider potential impacts such as discrimination, safety failures, privacy breaches and security vulnerabilities, along with affected stakeholder groups, regulatory classification, reversibility of decisions and the consequences of system failure.

Higher-risk AI systems require stronger controls, including rigorous development practices, extensive testing and validation, defined human oversight, comprehensive documentation, continuous monitoring and senior-level governance. Lower-risk applications can be managed with proportionate controls that support faster deployment while maintaining accountability.
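Proportionate governance can be sketched as a mapping from a risk assessment to a tier, and from the tier to a set of controls. The minimal Python example below assumes a simple additive score over some of the factors named above (harm, regulatory exposure, decision impact, reversibility). The scoring thresholds, tier names and control lists are all hypothetical. ISO 42001 requires a risk-based approach but does not prescribe this formula.

```python
from dataclasses import dataclass

# Hypothetical risk factors, loosely based on those named in the text.
@dataclass
class RiskProfile:
    potential_harm: int        # 1 (negligible) to 3 (severe)
    regulatory_exposure: int   # 1 (none) to 3 (regulated use, e.g. credit)
    decision_impact: int       # 1 (advisory) to 3 (automated, affects people)
    reversible: bool           # can affected decisions be easily reversed?

# Illustrative control sets; each organisation defines its own.
CONTROLS = {
    "low": ["register in inventory", "owner sign-off", "periodic review"],
    "medium": ["documented risk assessment", "pre-release testing",
               "performance monitoring"],
    "high": ["impact assessment", "extensive validation",
             "defined human oversight", "continuous monitoring",
             "senior-level approval"],
}
TIER_ORDER = ["low", "medium", "high"]

def classify(profile: RiskProfile) -> str:
    """Map a risk profile to a tier using an assumed, simple scoring rule."""
    score = (profile.potential_harm + profile.regulatory_exposure
             + profile.decision_impact)
    if not profile.reversible:
        score += 2  # irreversible outcomes are weighted more heavily
    if score >= 9 or profile.potential_harm == 3:
        return "high"
    if score >= 6:
        return "medium"
    return "low"

def required_controls(profile: RiskProfile) -> list[str]:
    """Higher tiers inherit all controls of the lower tiers."""
    tier = classify(profile)
    controls: list[str] = []
    for level in TIER_ORDER[: TIER_ORDER.index(tier) + 1]:
        controls.extend(CONTROLS[level])
    return controls

recommender = RiskProfile(1, 1, 1, reversible=True)
credit_model = RiskProfile(3, 3, 3, reversible=False)

print(classify(recommender), len(required_controls(recommender)))
print(classify(credit_model), len(required_controls(credit_model)))
```

The product recommender lands in the low tier with three baseline controls. The credit model lands in the high tier and inherits all eleven. Letting higher tiers inherit lower-tier controls reflects the idea that stronger governance builds on, rather than replaces, the baseline.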

The same principle applies to third-party AI. Systems used for high-impact decisions require deeper due diligence, contractual safeguards and ongoing oversight, while lower-risk tools can be managed through standard vendor controls.

Organisations that apply this risk-based discipline avoid both excessive governance that slows innovation and weak oversight that leads to serious failures. Regular risk reassessment ensures the AI management system remains effective as technology evolves, regulations develop and new risks emerge, keeping governance aligned with both operational realities and business objectives.

-

The difference between successful ISO 42001 certification and problematic audit outcomes usually reflects how thoroughly AI systems are documented and how well governance processes are tested before external assessment.

Organisations often spend months establishing AI governance frameworks, only to identify gaps during audits. Common issues include incomplete AI inventories, risk assessments without sufficient technical depth, weak documentation of decision logic, poor data governance for training datasets, limited testing evidence and governance oversight that lacks meaningful engagement with AI risks.

Speeki’s pre-certification services are designed to identify and address these issues early.

A comprehensive gap analysis reviews the AI management system against all ISO 42001 requirements, highlighting undocumented AI systems, incomplete risk assessments, missing lifecycle controls, weak third-party AI governance and documentation gaps that could lead to non-conformities.

This is followed by mock audits that mirror the certification process. These include interviews with AI developers and governance personnel, review of AI system documentation and risk assessments, examination of data governance controls, testing of transparency mechanisms and assessment of evidence in the same way an auditor would.

The process identifies not only technical compliance gaps but also operational readiness issues. These can include teams that cannot clearly explain governance requirements, risk assessments that lack business context, controls that are documented but not applied in development workflows and governance bodies that review information without effective oversight.

Detailed findings and targeted remediation guidance allow organisations to strengthen their AI management system before formal assessment. For organisations with complex AI portfolios, extensive use of third-party AI or limited governance maturity, this preparation supports smoother certification and stronger responsible AI capability in practice.

-

The final weeks before an ISO 42001 certification audit require careful organisation of documentation and alignment between technical and governance teams.

All AI management system documentation should be centrally organised and readily accessible. Auditors will review the AI system inventory, system-specific risk assessments, development and deployment controls, data governance documentation, testing and validation evidence, monitoring records, governance meeting minutes and incident response procedures. Delays or gaps can indicate weak governance or insufficient oversight.

Prepare a master matrix linking each AI system to its risk classification, applicable controls and supporting evidence. This helps demonstrate how governance is applied consistently across the AI portfolio.
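One way to hold such a matrix is as one row per AI system, with each applicable control pointing to its evidence. This makes missing evidence easy to list before the auditors arrive. The Python sketch below is a minimal illustration. The control identifiers (`CTRL-RISK-01`, `CTRL-OVERSIGHT-02`) and file names are hypothetical placeholders, not ISO 42001 Annex A references.

```python
from dataclasses import dataclass, field

@dataclass
class MatrixRow:
    system: str
    risk_class: str
    # control ID -> evidence references (document names, links, ticket IDs)
    controls: dict[str, list[str]] = field(default_factory=dict)

def missing_evidence(matrix: list[MatrixRow]) -> list[tuple[str, str]]:
    """Return (system, control) pairs that have no supporting evidence."""
    return [
        (row.system, control)
        for row in matrix
        for control, evidence in row.controls.items()
        if not evidence
    ]

# Hypothetical matrix with placeholder control IDs and evidence files.
matrix = [
    MatrixRow("credit-scoring", "high", {
        "CTRL-RISK-01": ["risk-assessment-v3.pdf"],
        "CTRL-OVERSIGHT-02": [],
    }),
    MatrixRow("product-recommender", "low", {
        "CTRL-RISK-01": ["risk-assessment-v1.pdf"],
    }),
]

for system, control in missing_evidence(matrix):
    print(f"{system}: {control} has no evidence")
```

Running the check before the audit shows that the high-risk credit system lacks evidence for its oversight control. This is the kind of gap an auditor would otherwise find.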

Interview planning is also important. Select participants who understand both technical and governance aspects of AI. This typically includes AI developers and data scientists who can explain system design and training, product owners who understand business context and deployment decisions, risk managers responsible for impact assessments, legal or compliance staff addressing regulatory obligations and governance leaders providing oversight.

Audit logistics should be planned in advance. Arrange suitable meeting facilities, ensure access to relevant documentation and repositories where appropriate, prepare demonstrations of higher-risk AI systems if useful and confirm the availability of key personnel throughout the audit period.

Brief all participants on what to expect. Auditors will examine AI decision logic, test understanding of risks and mitigation measures, assess whether governance is applied throughout the AI lifecycle and verify that documented processes reflect actual practice.

Technical accuracy combined with effective governance matters more than perfection. Auditors expect to identify opportunities for improvement and place greater value on organisations that demonstrate genuine commitment to responsible AI. Well-prepared audits run efficiently and are usually completed within two to four days, depending on the size and complexity of the AI system portfolio.

-

ISO 42001 certification follows a structured two-stage audit process that typically spans four to eight weeks from initial assessment to certificate issuance.

Stage 1, the documentation review, usually takes one to three days depending on the number and complexity of AI systems in scope, organisational size and AI governance maturity. Auditors review the AI management system documentation, including the AI inventory, risk assessment methodology and results, policies and procedures, governance structures, lifecycle controls, data governance arrangements and transparency mechanisms. The purpose is to confirm that the system design meets ISO 42001 requirements and that the organisation is ready for operational assessment.

A Stage 1 report identifies documentation gaps, unclear governance arrangements or missing controls that must be addressed before progressing. Most organisations require two to four weeks to close these findings and demonstrate readiness for Stage 2.

Stage 2 is the main certification audit and typically lasts several days, depending on the complexity of the AI portfolio. It includes interviews with technical and governance teams, review of AI system documentation, validation of risk assessments, testing of control effectiveness, verification of data governance and examination of governance oversight. Auditors may request technical demonstrations, review training data documentation, examine testing evidence and assess monitoring processes.

Following Stage 2, the certification body completes a technical review and certification committee approval, which usually takes a further two to three weeks before the certificate is issued.
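The phase durations above can be added up to check the overall four-to-eight-week estimate. The Python sketch below treats every duration as calendar days and ignores scheduling gaps between phases. Because the text only says Stage 2 lasts "several days", the two-to-four-day range used for it is an assumption.

```python
# Phase durations in calendar days as (minimum, maximum).
# Stage 2 length is an assumption: the text only says "several days".
PHASES = {
    "Stage 1 documentation review": (1, 3),
    "Closing Stage 1 findings": (2 * 7, 4 * 7),
    "Stage 2 certification audit": (2, 4),  # assumed range
    "Technical review and committee approval": (2 * 7, 3 * 7),
}

def total_range(phases: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the minimum and maximum durations across all phases."""
    low = sum(minimum for minimum, _ in phases.values())
    high = sum(maximum for _, maximum in phases.values())
    return low, high

low, high = total_range(PHASES)
print(f"{low}-{high} days, about {low / 7:.1f}-{high / 7:.1f} weeks")
```

Under these assumptions the phases total 31 to 56 days, or roughly 4.4 to 8 weeks. This is consistent with the four-to-eight-week range quoted for the audit process.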

Once certified, organisations undergo annual surveillance audits and a full recertification audit every three years.

From initial implementation to certification, most organisations take between eight and fifteen months. The timeline is influenced by AI system complexity, existing governance maturity and whether AI governance is being established from scratch or built on existing frameworks. Understanding this timeline supports effective planning, realistic expectations and phased deployment of AI systems under an increasingly mature governance structure.

-

While ISO 42001 implementation consulting must be delivered by firms independent of the certification body to preserve certification integrity, Speeki supports your AI management system through focused training and technology.

Speeki’s two-day and three-day ISO 42001 training courses build internal capability to understand, interpret and apply the standard within real AI development and deployment environments. The training is designed for data scientists, AI engineers, product managers, legal and compliance teams and governance leaders, creating a shared understanding of AI governance principles and practical implementation.

Training covers AI risk assessment methods, lifecycle controls from development through deployment and monitoring, data governance for AI systems, transparency and explainability, third-party AI management, emerging regulatory requirements including the EU AI Act and governance structures for effective oversight. Courses can be delivered on site or remotely to ensure consistent understanding across teams.

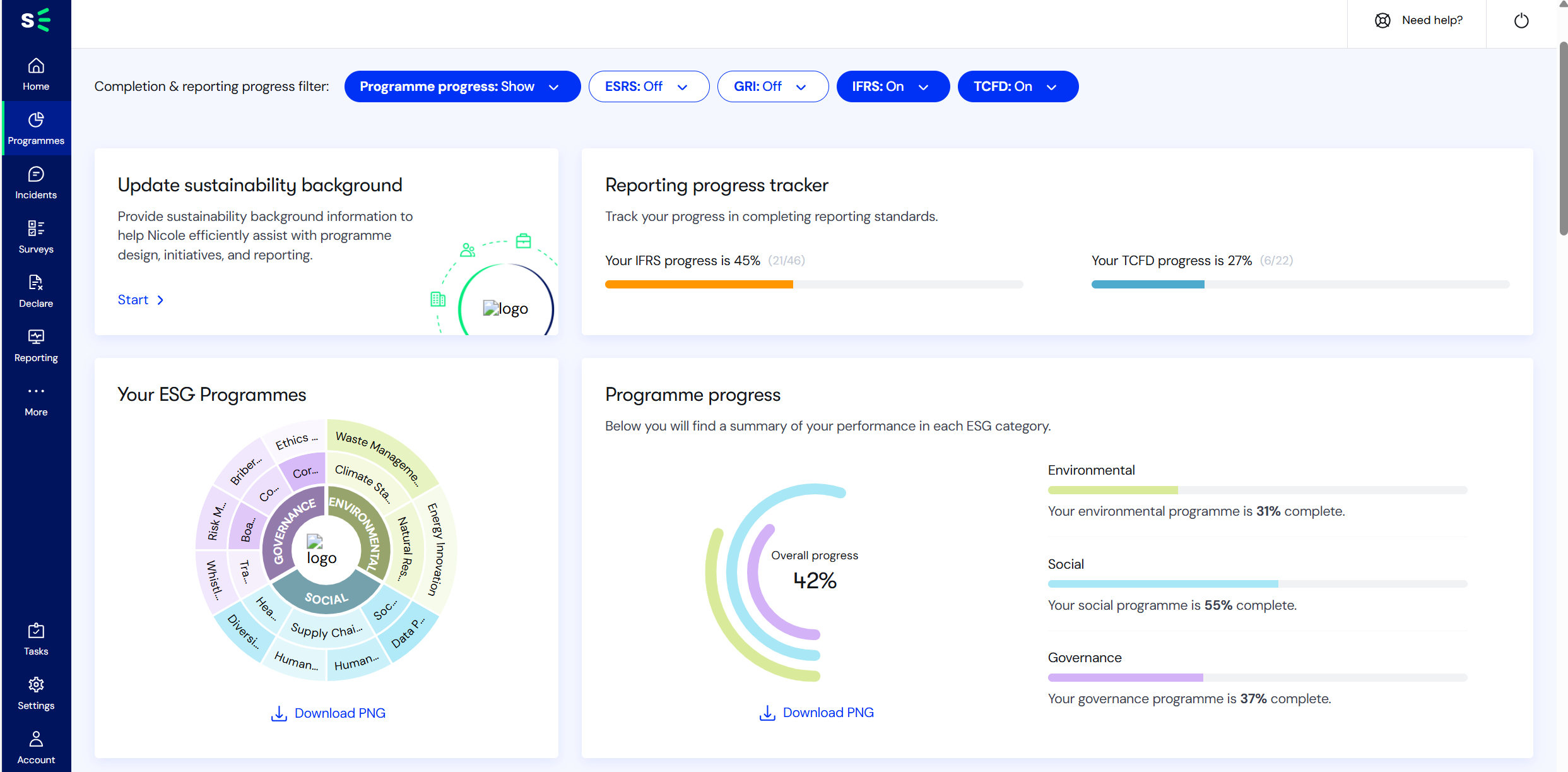

Beyond training, Speeki’s Engage technology platform supports efficient implementation of ISO 42001. The platform maintains AI system inventories with lifecycle tracking, supports AI risk assessments, centralises documentation, tracks control implementation, manages third-party AI relationships and records monitoring and incidents. Governance dashboards provide oversight and support evidence requirements for assessment while reducing administrative effort.

Together, training and technology provide a practical foundation for building and maintaining an effective ISO 42001 AI management system. This allows organisations to innovate responsibly while working with their chosen implementation partners on strategy, controls and organisational integration.

-

ISO 42001 certification costs follow a standard assessment methodology applied by accredited certification bodies, providing consistency in how audit duration is calculated. While daily auditor rates vary by certification body, auditor expertise in AI governance and geographic region, the number of audit days is determined using common ISO criteria.

Audit duration is based on organisational size, the number and complexity of AI systems within scope, AI development models (internal, third-party or hybrid), the number of personnel involved in AI governance and the geographic spread of AI operations. A single-site organisation with a small number of low-complexity AI systems may require three to four audit days across Stage 1 and Stage 2. Larger organisations with complex AI portfolios, high-risk applications, internal development teams and international deployments may require eight to twelve or more audit days.

In addition to audit fees, organisations should plan for implementation-related costs. These may include specialised training for technical and governance teams, legal review of AI governance arrangements, technical support for AI risk assessment and technology platforms to manage AI inventories, risk assessments and lifecycle documentation.

Ongoing costs typically include annual surveillance audits, usually one to two days, and a full recertification audit every three years.

Total first-year investment varies depending on AI portfolio complexity and existing governance maturity, with ongoing annual costs generally lower. Requesting a detailed quotation allows certification bodies to assess your specific AI environment and provide an accurate estimate based on audit scope and duration.

Want to learn more about how to build an AI management system (AIMS) according to ISO 42001?

Explore our insights to understand the role of the standard and how it should be implemented.

Six key reasons to get certified

1. Build better AI governance systems.

2. Improve oversight of suppliers to your AI systems.

3. Meet customer requirements for your AI-powered products.

4. Reduce the likelihood of governance breaches.

5. Improve reputation, integrity and trust in your AI systems.

6. Meet increasing legal and board requirements.

Need technology to implement your AI management system and reduce administrative burden by more than 60%?

Speeki offers an AI-powered platform called Engage®, available to clients.

Speeki Engage is designed to align with ISO 42001’s AI governance framework, providing an integrated digital system that maps directly to the requirements of the standard.

The platform replaces fragmented, manual AI governance approaches, such as AI inventories in spreadsheets, risk assessments in documents, controls spread across development tools and monitoring held in separate dashboards. Engage brings these elements together into a single system covering AI inventory, risk assessment, lifecycle controls, data governance, testing evidence, monitoring and governance oversight.

Rather than relying on disconnected systems that create governance gaps and weak audit trails, Engage presents a coherent AI management system. Each AI system links to its risk assessment, applicable controls, implementation evidence and ongoing monitoring data. This structure simplifies certification and ongoing governance by providing clear visibility for technical teams, governance bodies and auditors.

During ISO 42001 audits, assessors can review AI governance documentation efficiently, supporting structured assessment and demonstrating governance maturity. The AI inventory tracks systems developed internally, procured from vendors or embedded in third-party services, ensuring consistent coverage across the AI portfolio.

Most importantly, the platform supports an always-audit-ready approach, in which ISO 42001 certification reflects an operating AI management system that enables responsible innovation at scale rather than governance processes that slow adoption.

Want to learn more about implementing an AI management system and achieving certification?

Check out the Speeki Academy.

Gain an integrated certification by bundling multiple projects to save time and cost.

One audit team. One coordinated project.